New Work

The Impulse and the Response

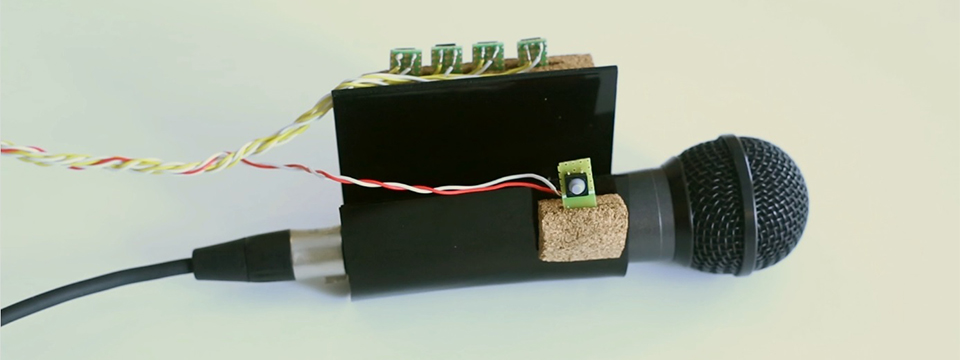

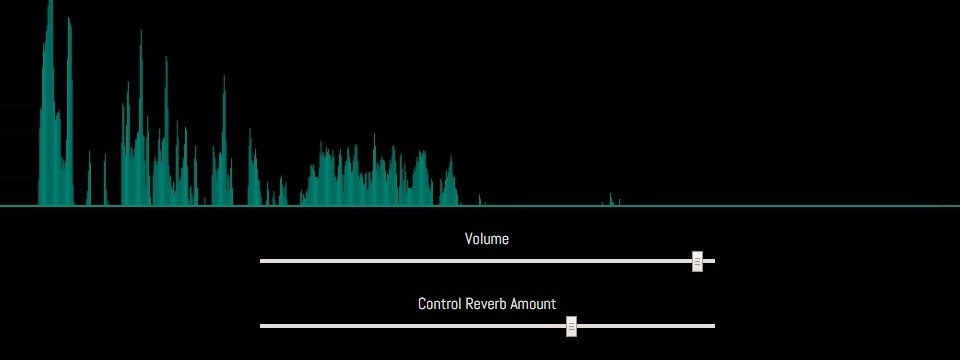

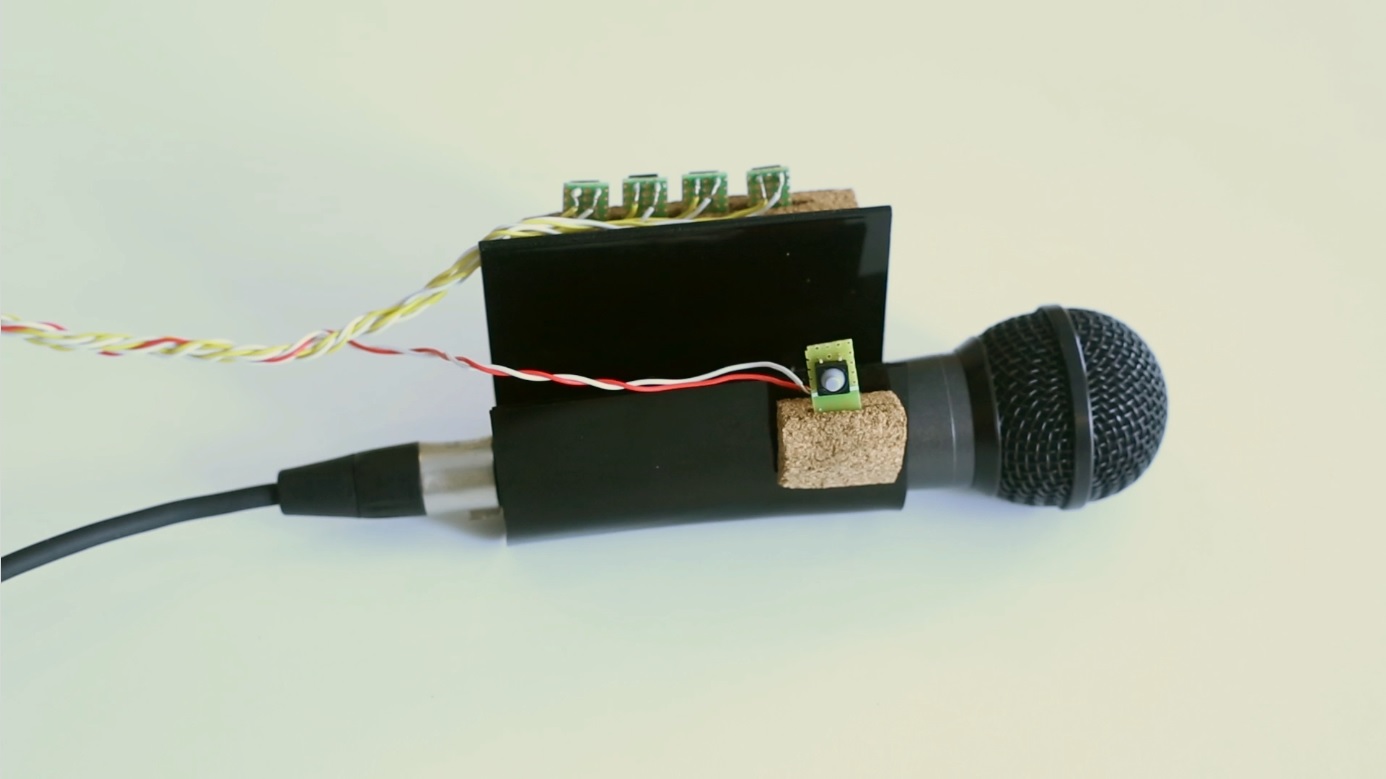

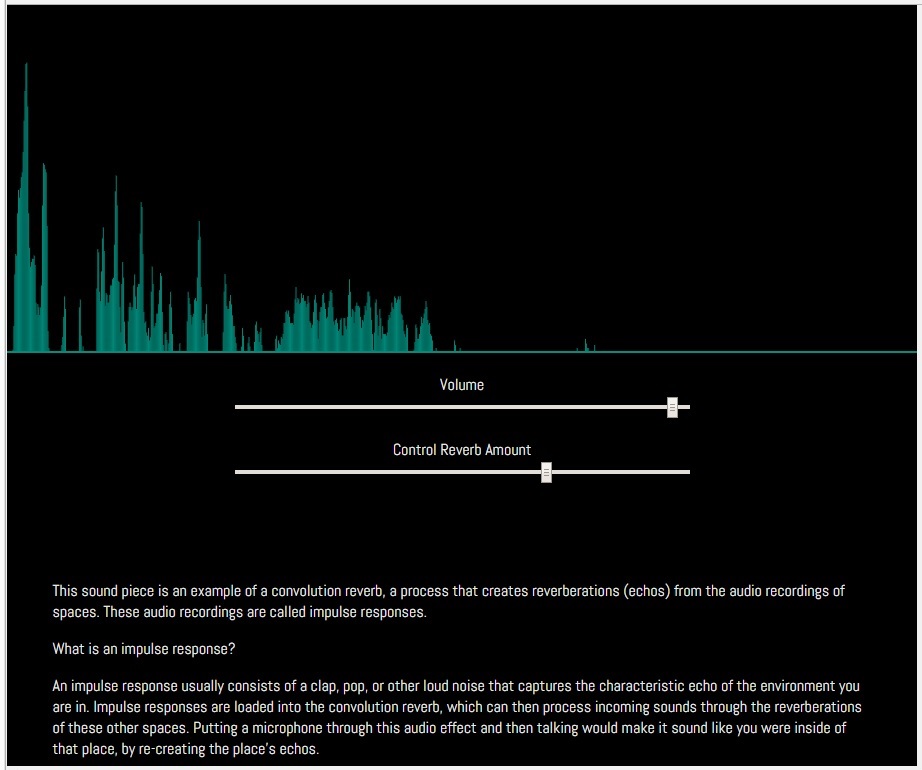

“The Impulse and the Response” is a piece consisting of two interactive sound works, played in the browser alongside explanatory text. Two artists, Édgar J. Ulloa and Daniela Benitez, provided audio performances. A slider changes the amount of reverb applied to the audio that is playing. The reverb is a re-creation of the sonic qualities of the inside of the Statue of Liberty. My prompt to the artists in our collaboration was, “If you could do a sound performance inside of the Statue of Liberty, what would it be?” The initial version of this piece can be found here: The Impulse and the Response

Traditionally, convolution reverb is used to easily recreate the reverbs of sonically pleasing spaces. Even in experimental, non-traditional uses, the focus is on innovative sound design results. I was interested in the potential for political or social commentary. Both of my collaborators describe themselves as affected by the United States’ immigration policy, social attitudes towards immigration policy, and the general climate of xenophobia. They wished to make pieces addressing these issues.

Giving them the opportunity to virtually inhabit this symbolic space not only offers the potential to critique the space and its multi-dimensional symbolism, but also uses the re-creation and virtualization of the space to mirror the physical, psychological, and political state of flux that many immigrants can face.

One challenge is communicating the technical aspects of the piece. The artistic metaphor relies completely on an understanding of how a convolution reverb works. However, most formal definitions of convolution reverb err on the technical side and, as a result, are not always helpful to the average person. With this in mind, I decided that a brief but thorough artist’s statement explaining convolution reverb was essential.

Artistically, I struggled with how to use convolution reverb in a different context. My initial idea was about the general potential of convolution reverb as an artistic message. I had thought less about the possibilities of recreating spaces and more about putting audio “through” another sound. Since convolution reverb works by loading other sounds, called impulse responses, to create reverberations, you can put any sound into it for unexpected results. The sound of the arctic shelf cracking and falling into the ocean could be used as an impulse response in a sound piece about global warming, for example.
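To make the idea of putting audio “through” another sound more concrete, here is a minimal sketch in Python. It is an illustration only, not the code used in the piece: the function names `convolve` and `mix` and the tiny sample lists are assumptions made for the example, and a real-time browser implementation would typically use a much faster method than this direct loop. Every sample of the performance triggers a scaled copy of the impulse response, and the copies are summed. The `mix` function stands in for the reverb slider, blending the untouched (dry) signal with the reverberated (wet) one.

```python
def convolve(dry, impulse_response):
    """Direct (time-domain) convolution of two lists of audio samples."""
    out = [0.0] * (len(dry) + len(impulse_response) - 1)
    for i, sample in enumerate(dry):
        # Each input sample adds a scaled copy of the impulse response,
        # starting at that sample's position in time.
        for j, tap in enumerate(impulse_response):
            out[i + j] += sample * tap
    return out


def mix(dry, wet, amount):
    """Blend dry and wet signals; amount 0.0 is fully dry, 1.0 is fully wet."""
    amount = min(1.0, max(0.0, amount))
    # Pad the dry signal with silence so it is as long as the reverb tail.
    padded = list(dry) + [0.0] * (len(wet) - len(dry))
    return [(1.0 - amount) * d + amount * w for d, w in zip(padded, wet)]


# Hypothetical data: a two-sample "performance" and an impulse response
# made of the direct sound plus one quieter echo two samples later.
dry = [1.0, 0.5]
impulse = [1.0, 0.0, 0.25]

wet = convolve(dry, impulse)
print(wet)                 # [1.0, 0.5, 0.25, 0.125]
print(mix(dry, wet, 0.5))  # [1.0, 0.5, 0.125, 0.0625]
```

Swapping `impulse` for a recording of a space gives the sound of that space; swapping it for any other sound gives the less predictable results described above.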

After I discussed the general concept with my advisor Allison Parrish, it became apparent that this approach could be used in very problematic ways: not only could procuring the media amount to appropriation, but a serious and fatal issue could feel boiled down into a “sound toy” that you play with. Allison’s advice to hold the technology and the message in separate spaces while meditating on potential concepts was invaluable, and led me to the current incarnation of the project. I feel that what I have now is a much more positive approach that still carries a strong social message and is empowering to artists. This revolves around the second part of my project.

The piece is a single-page, browser-based experience. Explanatory text guides you through what is going on in the piece and introduces you to the sound artists. However, I always intended this to be a two-part project. The other half is what I've called Convolution Reverb Online.

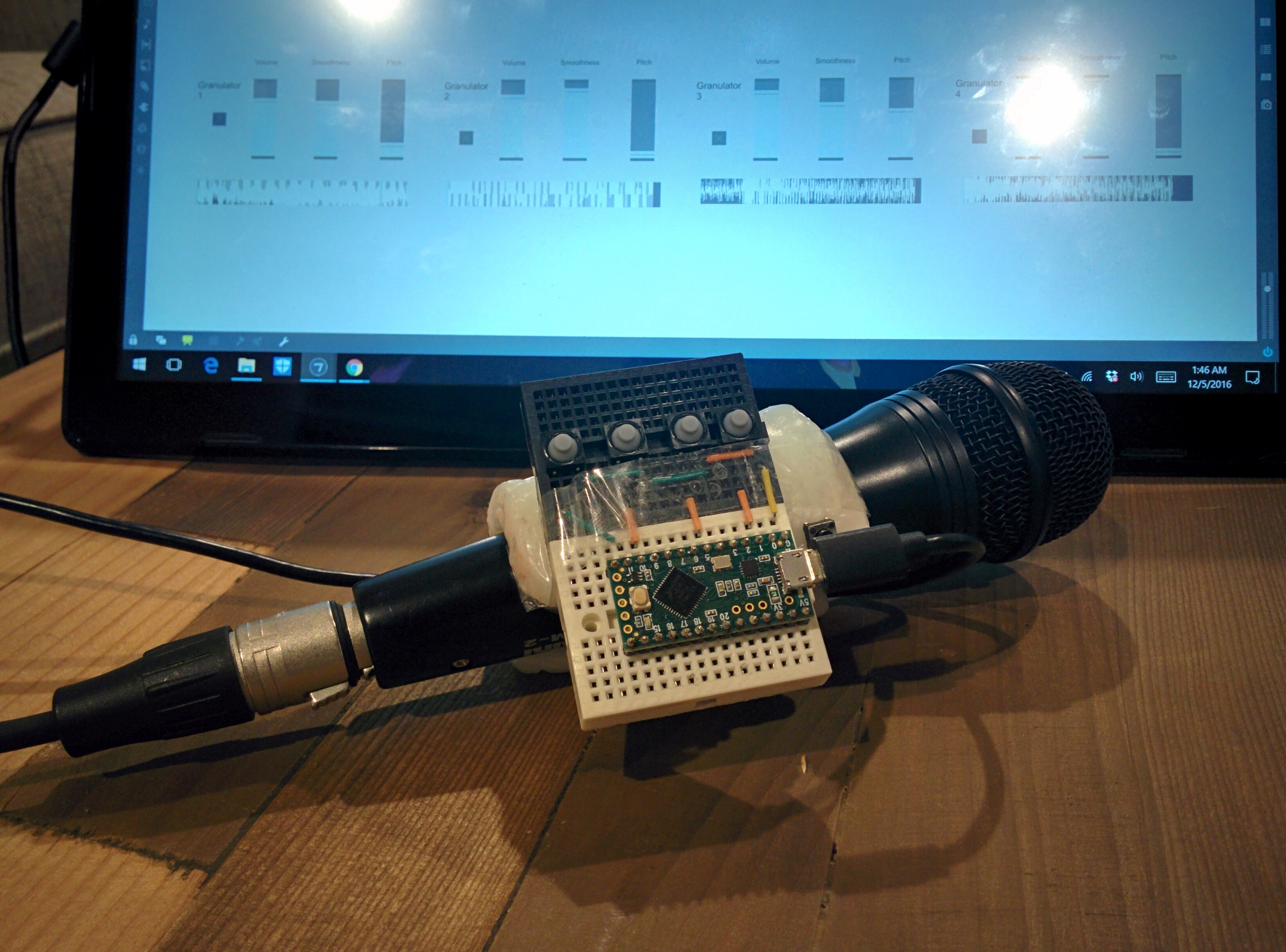

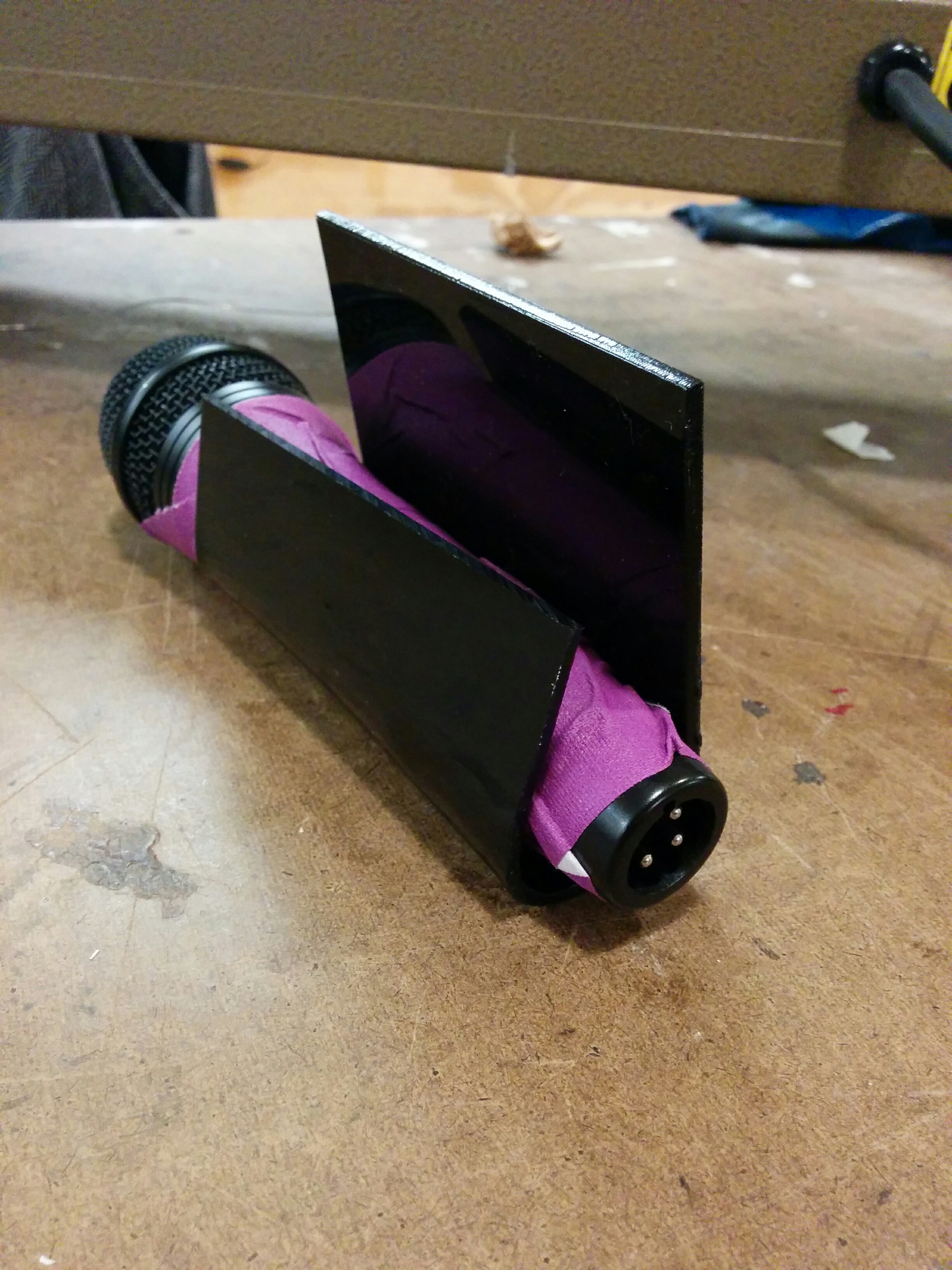

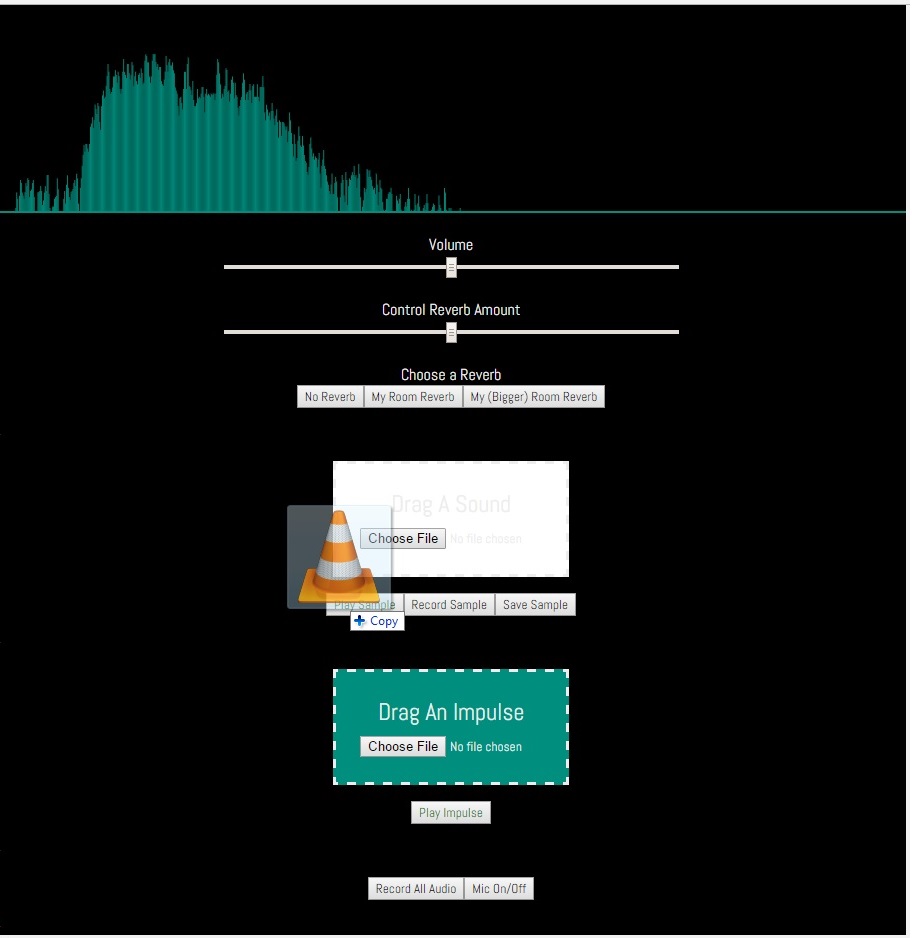

This is the prototype of a user interface that allows anyone to create their own versions of the experience offered in "The Impulse and the Response". Users are able to upload sounds or record them live. Convolution reverbs can be created by simply uploading your own sounds, without any technical knowledge needed. Convolution reverb can be applied to live or pre-recorded performances. With further development, my vision is to give artists more autonomy from technical collaborators.

While there are things to revisit on this project in terms of design, layout, and code cleanliness, I am happy with the results of this first approach. I am passionate about trying to reach new forms of artistic expression with technology, and I feel that the technology successfully supports the core ideas. Additionally, I'm happy I could implement both the piece and the creation tool as browser experiences. This allows for a wider audience and greater access for artists. And again, many thanks to my collaborators Édgar J. Ulloa and Daniela Benitez.